Think tanks need to differentiate

The Coronavirus pandemic caused many thousands of businesses to go bust. I know of only two think tanks (the EastWest Institute and Quilliam) that did so. Despite significant growth in the sector (and so, you’d think, a growing number of less reliably funded think tanks), think tanks don’t tend to die.

This is likely a feature of the business model, which for most Anglo-American think tanks is straightforward.

If the Anglo-American think tank market were an efficient market – and the market analogy is not one that all will agree with – those that fail to have policy influence (i.e., achieve an outcome) would go out of business. Funding sources would divert their resources to those think tanks that had a track record of achieving impact. (And impact would be assessed by what a policy intervention achieved, not simply by whether it was implemented.)

This is also true at the more academic end of the spectrum (the tip of Fink’s pyramid, see Part 1). Universities in the UK, for example, are financially incentivised to align their research with real-world policy relevance.

The think tank market is not efficient, however, largely because think tanks – like public relations firms, which similarly aim to influence public opinion and policy outcomes – have a distinct challenge in proving their real-world impact.

Put another way, it is hard to know if a think tank is any good at doing what it exists to do.

How to evaluate the impact of ideas?

Attribution modelling is the way marketeers assign value to the different parts of a campaign. It allows you to identify which output led to a specific outcome: did this specific event (output) change that policymaker’s mind, so that they drafted that policy in this particular way (outcome)?

Despite much effort, there is no attribution model for influence, or the spread and penetration of ideas.

To be clear, this is not about evaluating the effect of policies themselves. Our work in policy evaluation applies different methodologies to estimate impact and cause-and-effect relationships. The same econometrics do not provide a reliable insight into influence, however.

This is as relevant for think tanks as it is in my discipline: public relations and communications.

This is a key reason PR is often seen as the ‘third wheel’ of the communications disciplines when compared to marketing and advertising. The latter are able to point to data clearly showing immediate upticks in revenue based on their work (or not).

For example, it is relatively straightforward to track a user clicking on a social media post, reading a blog, being retargeted a few hours later, and returning to purchase a product. Using analytics tools, we can attribute the social media content, blog post, and retargeting as factors in the eventual sale. This data allows us to build an attribution model that helps us understand what works.
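To make that concrete, here is a minimal sketch of one simple attribution rule, linear attribution, in which every touchpoint that preceded a sale receives an equal share of its value. It is one common rule among several (first-touch and last-touch are others), not a description of any particular analytics tool. The function name, channel names, and sale values are all hypothetical and chosen purely for illustration.

```python
from collections import defaultdict


def linear_attribution(journeys):
    """Split each sale's value equally across the touchpoints that preceded it.

    `journeys` is a list of (touchpoints, sale_value) pairs, where
    `touchpoints` is the ordered list of channels a customer interacted with.
    """
    credit = defaultdict(float)
    for touchpoints, sale_value in journeys:
        if not touchpoints:
            continue  # nothing to attribute the sale to
        share = sale_value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)


# Hypothetical journeys: channel names and sale values are made up.
journeys = [
    (["social_post", "blog", "retargeting_ad"], 30.0),
    (["blog", "retargeting_ad"], 20.0),
]

credit = linear_attribution(journeys)
for channel, value in sorted(credit.items()):
    print(f"{channel}: {value:.2f}")
```

The rule only works because every step of the journey is recorded and tied to a sale. There is no equivalent log of who encountered an idea, when, and whether it changed their mind.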

We are not able to track the ‘purchase’ of ideas in the same way, so instead our models are theoretical, and our monitoring and evaluation work largely focuses on tracking proxies.

At the higher end of the market, evaluation surveys are conducted. But even then, they remain proxies for success, and do not categorically confirm that the campaign you just ran actually caused the change you are presenting as your success.

If you compare this to being able to show that a user clicked an advert and moved to purchase a pair of socks within minutes, the relative challenge is clear. Those who work in influence rely on correlations and qualitative feedback to demonstrate impact. Those who work in digital marketing can prove causation.

This is particularly challenging in policy influence as it can take decades to get a policy proposal implemented.

I know the strength of public relations. I know from running countless experiments with Cast From Clay’s own communications that public relations can be far more potent than marketing when done right.

And I believe the same is true of think tanks and the power of ideas to evolve viewpoints and catalyse change.

But evaluating communications is difficult and the evaluation challenge is similar for think tanks:

How much of a relationship do you really have with that policymaker? Did you actually influence their thinking, or, as one British Shadow Minister put it to me, do they just use you when they want third-party support for something they already think? Does anyone read your reports?

This is not new.

“All too often, think tanks gauge their success in terms of public relations victories measured in inches of press coverage, rather than more meaningful and concrete accomplishments.”

Richard Fink

Little has changed since Fink wrote those words a quarter of a century ago.

Claims of influence are to a large extent anecdotal and reputational. Some make a show of it (as they should): the UK centre-right think tank Policy Exchange had a chart on their office wall that traced the path of policy ideas from their origin through to their implementation. How much of that was down to them?

Quotes from leading politicians on website homepages help create the perception of influence, but the influence of many think tanks is much more subtle and impossible to track.

One such subtle form of influence is the ‘legitimator’, where the think tank has shifted the bounds of what is considered socially permissible and thus allowed a specific policy idea to be pitched.

Another is the ‘happenstance conversation’, in which the think tank isn’t necessarily the entity exerting the influence, but has acted as a catalyst for influence to take place.

The importance of think tank brands

The think tank business model is not built on a rigorous assessment of their abilities to exert policy influence (nor the quality of their policy recommendations), for the simple reason that this is very hard to prove and most think tanks do not invest in their evaluation capabilities.

The business model is built on the perception of their ability to influence, held by everyone from policymakers to the media to the engaged public. It is the perception of their brand, and of the individuals who fly under its flag, that delivers think tanks funding opportunities and opportunities for influence.

Despite this, many think tanks remain undifferentiated: they retain generalist positioning statements – describing themselves as the conductors of non-partisan and objective research – that fail to set them apart from their peers. They haven’t done the work needed to invest in their brand and set themselves apart.

There are several that buck this trend. The Cato Institute has a clear values-focused positioning. The Information Technology & Innovation Foundation has a clear thematic-focused positioning.

The perfect think tank has both: a clearly articulated value set, and a clearly articulated area of expertise.

Very few think tanks have both, even the most well regarded. Consider this mission statement, which is completely accurate, yet indistinct and all-encompassing at the same time:

Our mission is to conduct in-depth research that leads to new ideas for solving problems facing society at the local, national and global level.

How many think tanks can stand behind this statement? Many.

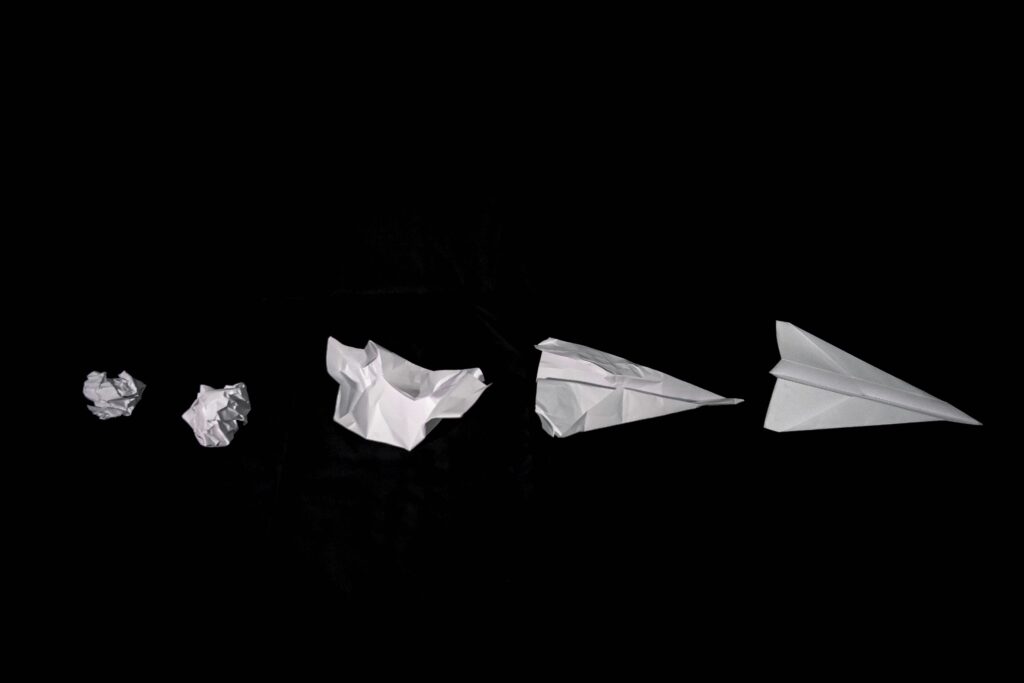

So, in short: